The Personalization of the Outdoors

We’ve grown up seeing the profound impact that personal computing and smartphones have had on our everyday lives. This was made possible by two megatrends: the widespread digitization of information and the network connectivity to share that information.

Our modern gadgets convert between the analog information found in nature, such as sight and sound, and the digital information that computers can process. When we take a selfie with a smartphone, for instance, the camera takes the analog light and color information coming through its lens and turns it into a digital file, which we can then edit or send to our friends.

Likewise, when we download a digital music file and play it, this digital information is turned back into analog sound waves that we can hear. This digital-to-analog, or analog-to-digital, conversion happens all the time in our daily lives, but only across very short distances. Our PCs, smartphones, and tablets are useful to us basically at the distance of our fingertips. Our cameras and microphones are useful only across small distances.

We’re now at the start of the next megatrend, which is to digitize the outdoors at a distance and dramatically extend the range of our human senses. An early example of this is the optical, radar, and lidar sensors being used in semi-autonomous driving systems. These systems take outdoor information captured at a very short distance (about the reach of our headlights) and convert some of it into digital information. Onboard computing in the car then uses that digital information to stay in a driving lane, avoid obstacles, or slow down when needed. There’s no useful network connection yet, so smart vehicles can’t trade information in a practical sense.

MatrixSpace is a company that was formed to address the digitization of the outdoors and to make that information highly useful to people. Creating an understanding of objects and their motion across kilometers of distance requires new sensor technology that doesn’t exist today at a deployable price, performance, size, or weight. These smart sensors also become a lot smarter if they can reliably talk to each other across a secure, high-bandwidth network. Just as we see much better with two eyes than one, the accuracy of these smart sensors is greatly enhanced when more than one sensor is looking at the same thing at the same time and the sensors can talk to each other.

Capturing a larger area of the outdoors in digital form, and then having local artificial intelligence process that data and inform us of what is out there, gives us the ability to personalize how we adapt to outdoor spaces in a way that no one is doing today. We’ve invested heavily to bring a new class of AI radar products to market, which combine gracefully with optical and other sensor types to give the range, accuracy, and performance required to digitize the outdoors at a distance.

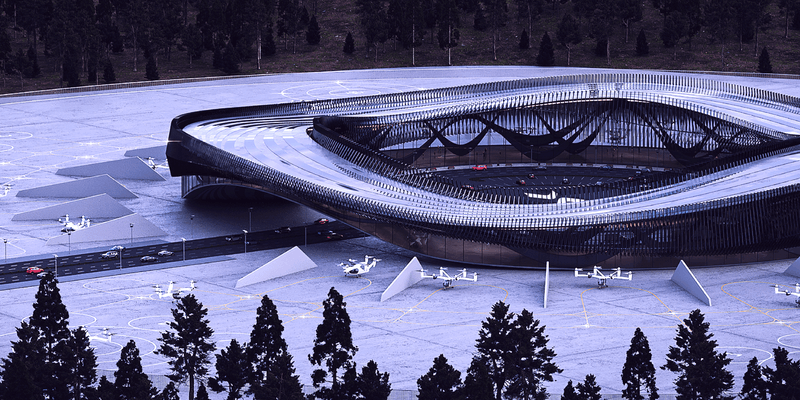

The uses of these capabilities are deep and wide across most major industries. Safety, security, and inspection will be dramatically enhanced using smart sensors on the ground, on vehicles, and on drones. Transportation will be made safer, quicker, and more efficient both on the ground and in low airspace. The rapidly growing fleet of intelligent light aircraft (industrial drones, eVTOLs, and helicopters) will benefit from having a comprehensive “bubble of awareness” around each aircraft that enables safe flight in any weather conditions.

We are just starting this amazing new chapter in how we personalize and make better use of our surroundings. It’s an adventure that we’re excited to be part of, and it’s going to have a profound effect on how we adapt to what’s happening in spaces around us.

Learn more at MatrixSpace Radar, Drone Detection, and AI Sensing.
